Vision

Article curated by Ginny Smith

Vision is a key human sense – we use it to spot danger and navigate our environment. Despite this, there is still plenty we don't know about how this sense works – both in us and in other animals. In the future, this understanding will be vital to create robots that can see and analyse visual information.

There is a huge amount we don't know about the human sense of vision. As we seek to develop better and better computational vision, we identify more unknowns. For example, the ability to recognise a blurry face despite the loss of detailed information suggests we must commonly rely on low resolution data, using our memories to fill in the blanks. We also don't fully understand how our brain cancels out the effects of blinking, eye movements, and the fact that only a tiny region of the retina can see in high resolution, to provide a seemingly static and clear image of the world.



Nevertheless, there are still many elements in the retinal circuitry that we haven’t figured out yet, for example, how the brain translates signals into our picture of the world. What most of the cells in the inner retina actually do remains a mystery.

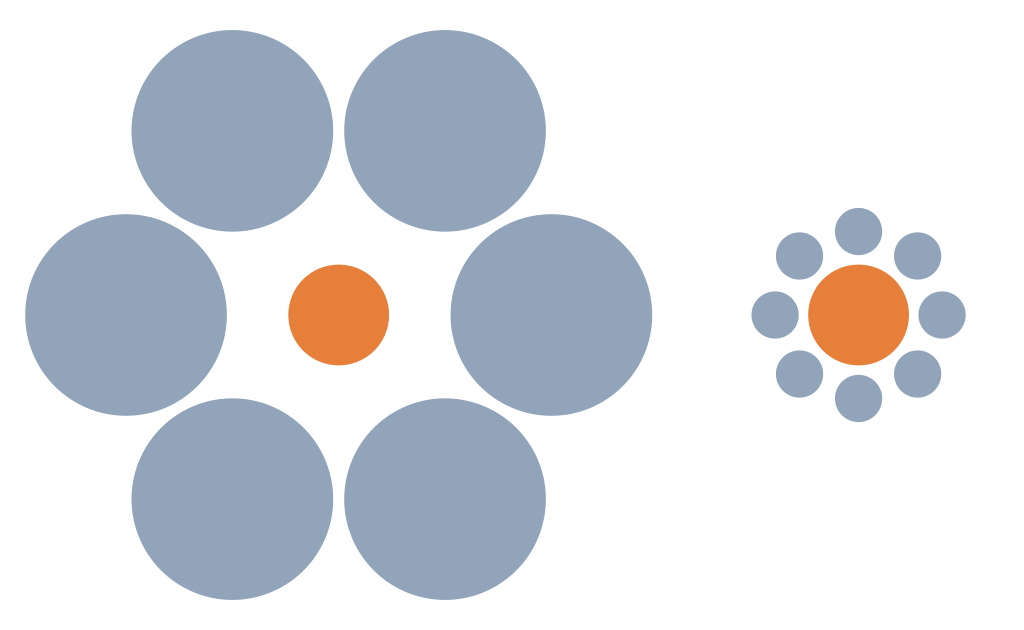

Once the signals from the eye begin their journey to the brain, things become even more complicated. The classical view says there are two streams of visual processing, known as the ventral and dorsal, or "what" and "where", streams. The ventral or "what" stream provides us with conscious recognition of the object and its details, but is slow and prone to illusions. The dorsal "where" stream, meanwhile, drives our actions towards an object, often quickly and subconsciously. This idea is supported by studies on patients with damage to areas involved in one stream but not the other, who may, for example, be able to see an object but not use it to guide motion. Some studies have also shown that while we fall for illusions such as the Titchener illusion (see below) when asked about them consciously, we use the actual (rather than illusory) size to drive movements.

More recent studies have raised questions about this distinction – showing that if visual tasks and those requiring actions are appropriately matched, there is no difference in the size of the illusion seen. The dissociations seen in patients also seem to be less clear cut than originally thought. Other research has supported the idea that these processing pathways are less distinct than first thought, working together as well as separately. Studies in primates suggest there might even be three processing systems – one for shape, one for colour, and one for movement, location and spatial organisation.

Research has also indicated that visual processing might be intimately linked with audio processing. Observations of changes in ear canal pressure, which scientists think is the middle-ear muscles moving the eardrum, have been matched up with eye movements: when our eyes move to an object, so do our ears. Scientists have suggested that both motions are brain-directed, but it’s also possible that the eyes send a signal to the brain that then sends a message to the ears to follow, the opposite, or both in a big feedback loop. It’s thought that syncing the ears and eyes facilitates visual processing, because it helps us identify what is making what sound. However, the discovery is still new and there remains lots to find out about it.



Whatever route it takes, information from the eyes is processed in the visual cortex, located in the occipital lobe at the back of the brain. The primary visual area (V1) is the first part of the cortex to receive information from the eye, which travels via the lateral geniculate nucleus. From here, the areas involved are harder to distinguish. We used to think that the information then travelled through various other areas, which processed increasingly complicated elements – starting with edges and shapes, then moving on to colour, movement and areas that respond to attention. However, we now know that rather than a simple hierarchy, these areas connect in complex patterns – feeding back to lower areas as well as forward to higher ones. V1, for example, can be modulated by attention, something previously thought to only be taken into account by V4. There are also disagreements about the exact location and extent of each area. It may not be useful after all to divide the visual cortex up to understand how this complex system works.

Vision Problems

It has long been thought that there is a link between reading or studying and vision problems such as short-sightedness (myopia), but recent research suggests the important factor is not how long children spend staring at something close, but how long they spend outside. The mechanism behind this is as yet unknown. One idea is that plenty of bright, natural light is protective for the developing eye; others argue that it is the viewing of landscapes and objects at a distance that has the effect. Neither explanation has yet been confirmed.

Scientists are also looking into alternatives, such as bright, daylight-mimicking lamps for children in places where natural light is limited or where outdoor play is impossible.


Visual Illusions

The moon looks larger when it is near the horizon than when it is high in the sky, even though its actual size does not change. One theory is that when we view objects like birds or clouds in the sky, those on the horizon are further away than those above us, so we make the same assumption about the moon, and our brain corrects its size based on distance, making it seem bigger. This is an example of size constancy. However, when asked, few people consciously perceive the horizon moon as being further away, casting doubt on this interpretation. An alternative is that objects on the horizon, like trees, make it seem bigger by comparison. Interestingly, looking at the moon with your head upside-down (e.g. by bending over and looking at it through your legs) reduces the size of the illusion, though no one knows why!
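The size-constancy explanation above can be put as a small calculation. The following Python sketch is only illustrative: the function name `inferred_size` and the two assumed distances are hypothetical, and 0.5 degrees is the approximate angle the moon covers in the sky. It shows that the same angular size implies a larger physical size when the assumed distance is larger.

```python
import math

def inferred_size(angular_size_deg, assumed_distance):
    """Physical size implied by an angular size at an assumed distance.

    Uses the standard geometry: size = 2 * distance * tan(angle / 2).
    """
    half_angle = math.radians(angular_size_deg) / 2
    return 2 * assumed_distance * math.tan(half_angle)

MOON_ANGLE_DEG = 0.5  # approximate angular size of the moon

# Hypothetical assumed distances in arbitrary units (not measurements).
overhead = inferred_size(MOON_ANGLE_DEG, assumed_distance=1.0)
horizon = inferred_size(MOON_ANGLE_DEG, assumed_distance=1.5)

print(f"overhead: {overhead:.4f}, horizon: {horizon:.4f}")
print(f"horizon moon inferred as {horizon / overhead:.2f} times larger")
```

The image on the retina is identical in both cases; only the assumed distance changes. This is a sketch of the reasoning, not a model of what the brain actually computes.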

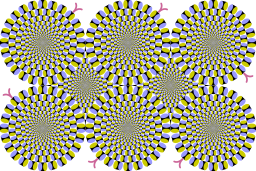

One suggestion for the mechanism is that areas with a greater difference in luminance (black and yellow or blue and white) are processed more quickly by the brain than those with more similar luminance. This difference in processing speed could create the illusion of motion. However, this mechanism can’t explain other illusions that also produce illusory motion, so for now their mechanism remains a mystery.

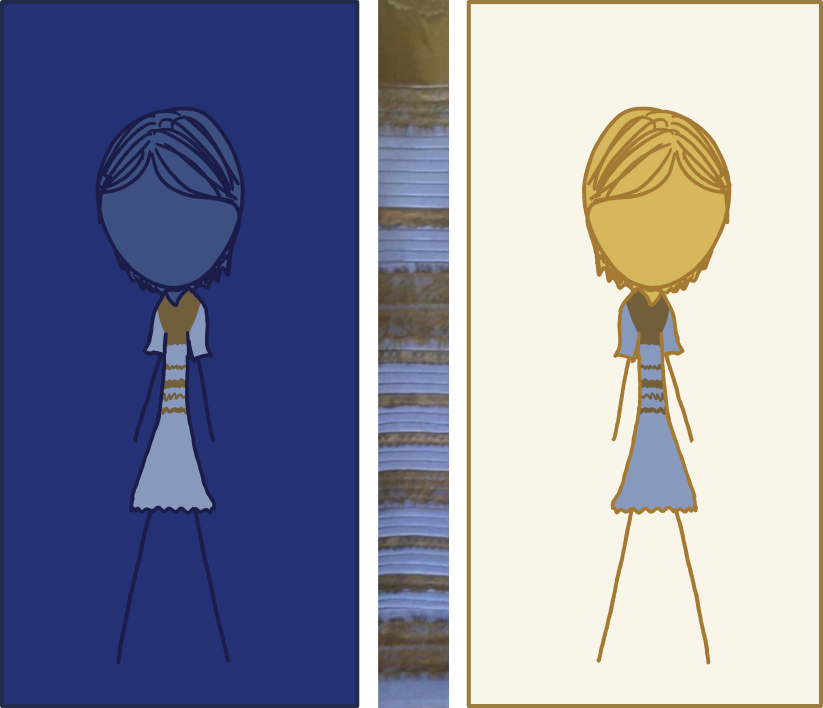

In February 2015, the internet went crazy over a picture of a bodycon dress with lace trim. Some saw a blue dress with black detail while others swore blind it was white with gold trim. So what was going on? How can an innocent picture cause such a divide? It was soon confirmed that the dress itself was blue and black, but in the image, the pixels were actually light blue and brown. One study of 1,400 people discovered that 57% saw it as blue and black, 30% saw it as white and gold, and 11% saw it as light blue and brown[2].

Scientists think that the difference is down to what assumption your brain makes about lighting. The picture doesn't make the lighting clear, so your brain has to guess. If it assumes cool daylight, the dress looks white/gold, because it factors out the blue tinge as a lighting effect. If it assumes incandescent light, the dress looks blue/black. Scientists discovered that they could change what colour people saw by giving them cues as to the kind of lighting involved.
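The idea of "factoring out" the lighting can be sketched in a few lines of Python. This is a simplified illustration, not the method used in the study: it scales each colour channel by the assumed light colour, loosely in the spirit of von Kries adaptation. The function name `discount_illuminant` and all pixel and light values are hypothetical, chosen only to resemble "light blue", "brown", a bluish light and a yellowish light.

```python
def discount_illuminant(pixel, illuminant):
    """Estimate surface colour by dividing out an assumed light colour.

    Each RGB channel is rescaled by the matching channel of the
    illuminant, then clipped to the 0-255 range.
    """
    scale = max(illuminant)
    return tuple(min(255, round(p * scale / i)) for p, i in zip(pixel, illuminant))

# Hypothetical RGB values, for illustration only.
LIGHT_BLUE_PIXEL = (130, 140, 190)
BROWN_PIXEL = (120, 100, 60)
COOL_DAYLIGHT = (180, 200, 255)      # assumed bluish light
WARM_INCANDESCENT = (255, 210, 150)  # assumed yellowish light

for name, light in [("cool daylight", COOL_DAYLIGHT),
                    ("incandescent", WARM_INCANDESCENT)]:
    body = discount_illuminant(LIGHT_BLUE_PIXEL, light)
    trim = discount_illuminant(BROWN_PIXEL, light)
    print(f"assuming {name}: body={body}, trim={trim}")
```

With the bluish light assumed, the body comes out close to neutral (whitish) and the trim strongly yellow; with the yellowish light assumed, the body comes out strongly blue and the trim close to a dark neutral. The same pixels give different answers depending only on the assumed lighting.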

Which of these camps you fall into could be down to a number of things. One suggestion is that larks, who wake early and spend more waking hours in daylight, assume daylight more often, fitting with the finding that seeing white and gold was more prevalent amongst women and the elderly. Others think it might be down to the yellowing of the corneas that happens with age. Some experts argue that neither of these suggestions fits, and that there must be some other, as yet unknown, factor involved.

Structural colour

Structural colour comes about when microscopic (and sometimes even smaller) structures occur in things such as shells, butterfly wings, feathers, and beetle shells. These structures interact with light in ways that affect the colour we see (e.g. through reflection, refraction or diffraction). This means that although pigment may not be present, the material looks coloured. Recent discoveries of structural colour in unexpected materials have allowed scientists to guess at what colour dinosaurs were, and have led to the conclusion that structural colour may be more common than previously believed. It is also possible that some things exhibit structural colour seen only by insects with UV vision.


Animal Vision

There are many aspects of animal vision we don't understand, such as why some birds, like chickens, bob their heads as they walk. To find out more, researchers put pigeons on a treadmill, and found that the bobbing stops if their surroundings are stable. This suggests the birds are using the head movements to keep the appearance of a still world as they move – something we do via tiny, unconscious eye movements. Short bursts of head motion are easy for the brain to cancel out. This is similar to a technique used by dancers, where they leave their head behind as they start a pirouette, then snap it round at the last minute, so it is still for as long as possible.



Sequencing photoreceptor proteins revealed wobbegongs (a group of shark species) only have one kind of colour detecting cone cell. However, other researchers have observed sharks attracted or repulsed by certain colours. One researcher trained a lemon shark to select coloured targets from a mixture of ones of the same brightness and has identified the presence of colour-sensitive cones in the eyes of several species. As evidence is conflicting, fundamentally, nobody knows whether or not sharks can see in colour.



For years, researchers wondered how cephalopods – octopi and squid – could camouflage themselves by imitating the colours in nature if they were colour blind, as they appeared to be. Observational data suggested they couldn’t pick out colours of a similar shade, although they could tell the difference between black and white or differences in polarised light[3][4]. They were also found to have only one visual pigment, or cone. However, more recent research on cuttlefish suggests that they may indeed be able to see colour; it’s just that their eyes don’t do it like ours do. Whilst our pupils are round, theirs are moon-shaped. Researchers reckon that this means they can use clever focussing techniques to focus their one cone on any one colour at a time – whichever takes their fancy. If correct, this means that they can see in colour, but only one colour at a time. This could explain observations of behaviour and camouflage.


Chameleons have long been thought to have independent eyes; unlike humans they can move them in different directions separately and seemingly randomly. This ability, called voluntary strabismus, was tested by getting chameleons to play a computer game, where they were shown a double image of an insect[5]. The two images moved in opposite directions and the chameleon tried to catch one with its tongue. Researchers found the chameleon would focus with one eye first on one image, before deciding to go for the insect. If the eyes were truly independent, the researchers expected that the other eye wouldn’t play a role. However, the second eye snapped round to focus on the same insect as the first eye. Scientists think that this means the eyes are, in fact, cooperative. This is like how our hands work. If our hands moved truly independently, tasks like typing would be impossible! But we can type one-handed, which makes it look as though our hands are independent.

Scientists think some sort of cross-talk occurs, where each eye knows what the other is doing; movements are coordinated, but individual. When a point of interest appears, they cooperate. This is one of the first examples of this behaviour to be studied comprehensively; we don't yet know whether this theory may be extrapolated to other species, e.g. bird binocular vision.


Scientists are also interested in whether the mechanism for cooperative eye motion is innate or learnt. Exploration of the neural network behind the eyes that coordinates their motion suggests that motorneurons and specialised interneurons need to be trained and calibrated during infancy, and probably throughout life, in order to maintain the precise binocular coordination characteristic of primate eye movements despite growth, aging effects, and injuries to the eye movement neuromuscular system[6][7]. Malfunction of this network or its ability to adaptively learn may be a contributing cause of strabismus. Does this extend to chameleon vision? We don't yet know.


We know that some insects such as bees can see in UV, but why can't we? Researchers looking at monotremes and marsupials examined their cone pigments and genes, and found functional genes that code for wavelengths in the near ultraviolet, along with non-functional genes in the actual ultraviolet range. They think this implies early mammals once saw in the UV. Humans with tritanopia – one kind of genetic colour blindness – also have a non-functional S cone. In humans, loss of this S cone could be linked to historical nocturnal behaviour but, interestingly, some animals retain all their S cones but are nocturnal.

Computer Vision

Researchers have attempted to replicate the muscle motion of the human eye using piezoelectric ceramics, which change in size slightly when electricity is passed through them[8]. This allows them to more closely imitate the movements of the human eye and design robots that are more intuitive.


This article was written by the Things We Don’t Know editorial team, with contributions from Ed Trollope, Ginny Smith, Johanna Blee, Rowena Fletcher-Wood, Joshua Fleming, and Holly Godwin.

This article was first published on 2017-04-19 and was last updated on 2021-05-18.

References


[1] Kim, T., Soto, F., Kerschensteiner, D. (2015). An excitatory amacrine cell detects object motion and provides feature-selective input to ganglion cells in the mouse retina. eLife 4. doi: 10.7554/eLife.08025.

[2] Lafer-Sousa, R., Hermann, K. L., Conway, B. R. (2015). Striking individual differences in color perception uncovered by ‘the dress’ photograph. Current Biology, 25(13): R545-R546. doi: 10.1016/j.cub.2015.04.053.

[3] Hanlon, RT, and Messenger, JB (1996) Cephalopod Behaviour. Cambridge: Cambridge University Press.

[4] Hanlon, R. T., Messenger, J. B., & Bjorndal, K. A. (1998). Cephalopod Behaviour. Reviews in Fish Biology and Fisheries, 8, 223.

[5] H. K. Katz et al. (2015). Eye movements in chameleons are not truly independent – evidence from simultaneous monocular tracking of two targets. Journal of Experimental Biology, 218(13): 2097-2105. doi: 10.1242/jeb.113084.

[6] W. M. King, and W. Zhou (2000). New ideas about binocular coordination of eye movements: is there a chameleon in the primate family tree?. The Anatomical Record: An Official Publication of the American Association of Anatomists, 261(4), 153-161. doi: 10.1002/1097-0185(20000815)261:4.

[7] W. M. King (2011). Binocular coordination of eye movements–Hering’s Law of equal innervation or uniocular control? European Journal of Neuroscience, 33(11), 2139-2146. doi: 10.1111/j.1460-9568.2011.07695.x.

[8] Schultz, J. and Ueda, J. (2013). Nested Piezoelectric Cellular Actuators for a Biologically Inspired Camera Positioning Mechanism. IEEE Transactions on Robotics, 29(5): 1125–1138. doi: 10.1109/TRO.2013.2264863.
